The Core Concept - Brains and Hands

In our previous labs, we saw how powerful Large Language Models (LLMs) are, but we also identified a major weakness: they are locked in a box. They only know what they were trained on and cannot interact with the live world.

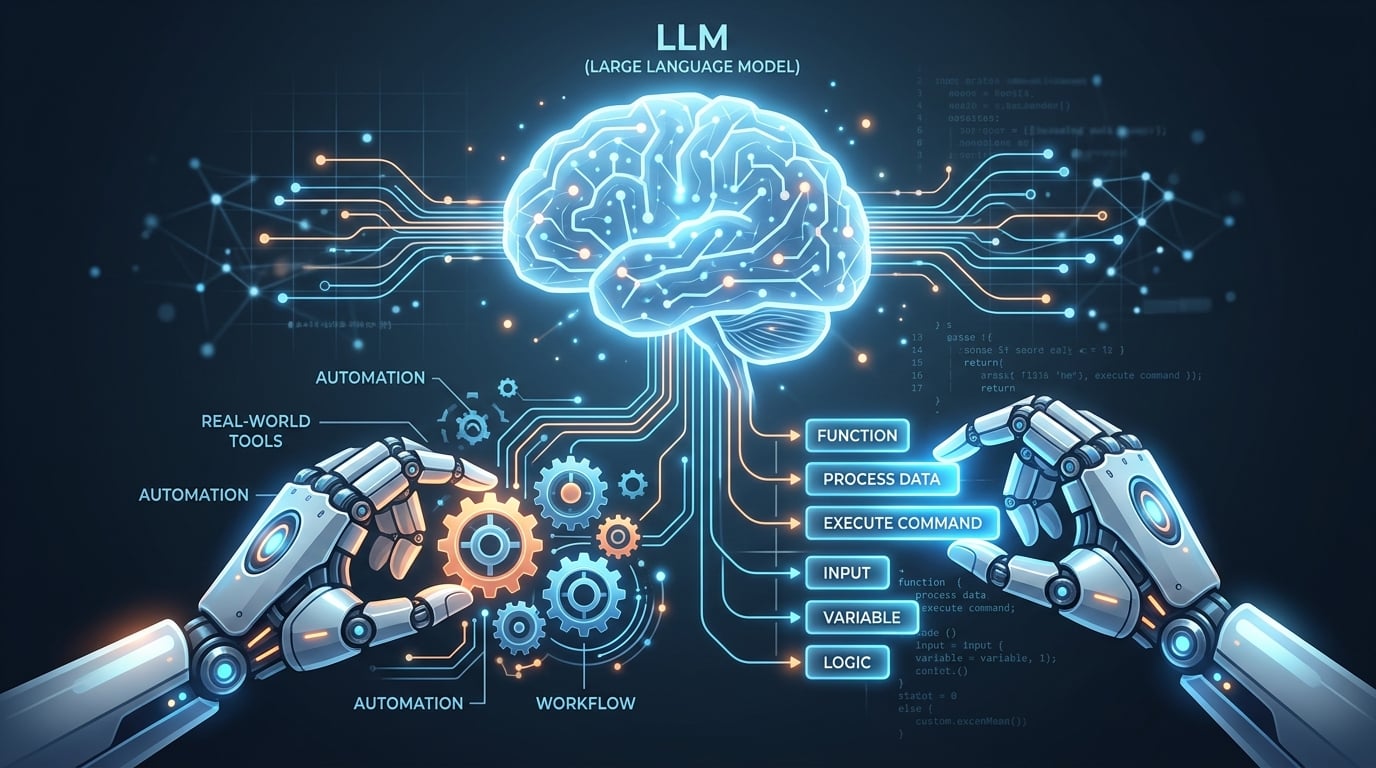

AI Agents solve this problem by combining two distinct components:

- The Brain: The LLM (like Qwen3-4B), which understands human language, handles logic, and maintains conversation context.

- The Hands: Python code and external tools (APIs) that actively interact with the real world, such as checking the weather, browsing the internet, or running calculations.

Teaching the Brain to Use Hands

An LLM cannot natively "click" buttons or "run" Python, because all it can do is produce text. Today, our goal is to teach the Brain to recognize when it needs help and to format its output so that our Python program can run the tool for it.
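Here is a minimal sketch of the Python side of this loop. It assumes the model has been prompted to reply with a JSON object such as `{"tool": "calculate", "arguments": {...}}` whenever it needs a tool, and with plain text otherwise. The JSON format, the tool names `get_weather` and `calculate`, and the stubbed weather result are hypothetical choices made for illustration, not the lab's actual setup. The "model replies" are hard-coded strings rather than real Qwen3-4B output.

```python
import ast
import json
import operator

# Hypothetical "Hands": plain Python functions the program runs on the model's behalf.
def get_weather(city: str) -> str:
    # Stub for illustration only; a real version would call a weather API.
    return f"Sunny, 22 C in {city}"

# Restricted arithmetic evaluator: numbers, + - * /, and unary minus only.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}

def _eval_node(node):
    if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)) \
            and not isinstance(node.value, bool):
        return node.value
    if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
        return _OPS[type(node.op)](_eval_node(node.left), _eval_node(node.right))
    if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
        return -_eval_node(node.operand)
    raise ValueError("unsupported expression")

def calculate(expression: str) -> str:
    tree = ast.parse(expression, mode="eval")
    return str(_eval_node(tree.body))

# Tool registry: the name the model writes -> the function Python actually runs.
TOOLS = {"get_weather": get_weather, "calculate": calculate}

def handle_model_output(text: str) -> str:
    """Run a tool if the model asked for one; otherwise return its text unchanged."""
    try:
        request = json.loads(text)
    except json.JSONDecodeError:
        return text  # Plain answer: the Brain did not need its Hands.
    if not isinstance(request, dict) or "tool" not in request:
        return text
    func = TOOLS.get(request["tool"])
    if func is None:
        return f"Error: unknown tool {request['tool']!r}"
    return func(**request.get("arguments", {}))

# Simulated model replies (hard-coded, not real LLM output).
print(handle_model_output('{"tool": "calculate", "arguments": {"expression": "12 * (3 + 4)"}}'))
# 84
print(handle_model_output('{"tool": "get_weather", "arguments": {"city": "Paris"}}'))
# Sunny, 22 C in Paris
print(handle_model_output("Hello! How can I help?"))
# Hello! How can I help?
```

The registry is a plain dict, so the model can only trigger functions that were explicitly listed. If the model writes a tool name that is not in the registry, the call is rejected rather than executed.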